Wearable computing is all the rage right now and is poised to explode in the consumer market once a number of high-profile projects go into full production. The bulk of the buzz surrounding the technology is focused on entertainment value and consumer usage. But how might wearable computing and data analysis come together to improve our lives? What kind of data can we collect and harness?

While wearable computing as a whole didn’t even make it into the Gartner Emerging Technologies 2012 Hype Cycle, it is obviously on the rise. Let’s take a look at some of the current and up-and-coming offerings. Or, if you’d rather get right to it, skip ahead to the Personal Data Collection section.

Pebble

Pebble was and is a Kickstarter sensation, smashing through its $100,000 goal and raising a total of $10,266,845. This watch comes in multiple colors and features an e-ink screen for low battery consumption and customization. Owners can select custom watch faces from Pebble and eventually from developers.

But what is really special about Pebble is the tech behind the scenes. Bluetooth connectivity allows it to interact with your smartphone (iOS and Android) and deliver notifications to your wrist. SMS, calendar events, even Twitter mentions and Facebook likes will set your wrist abuzz as the message pops up. In fact, you can even use it for Grid Control alerts. At the moment apps for the Pebble are scarce, but once the company catches up with its production requirements, the SDK is expected to be released so developers can build custom watch faces and apps which interact with a phone over Bluetooth.

While the Pebble has some great potential as a consumer device and does contain a 3-axis accelerometer, it is not yet fully exploitable for data collection. Pebble is at this time primarily a consumer device, though with the right additions it could one day become a true input/output device on its own. That’s not stopping Pebble though, and it sure as heck isn’t stopping me from wearing my Kickstarter Edition!

Google Glass

Google Glass is a much-hyped, Minority Report meets Geordi La Forge headset that fits on your face like a pair of glasses. It includes a small screen that acts as a heads-up display and various inputs including audio and video. With Google Glass, you can walk around taking pictures and recording anything you see, all by saying a few commands. You can send and receive messages and video (including real-time video chat), get directions overlaid on your field of view, get pertinent information such as flights or monetary conversions, and more.

It all starts with the words “Ok Glass”.

This level of geekism and ease of audio and video storage has already prompted one PR-savvy bar to ban Google Glass preemptively. Google is masterful when it comes to data collection and analysis, and a device which people will beg to wear around, one that captures video, audio, and interactions with a simple phrase, is right up their alley.

And to be honest, I can’t help but be taken in by it. Granted, most of the data collection it performs will be consumed by individuals and harnessed by Google; this is a monetization model which already works very well for them and for social media sites. With the presentation of Google Shoes yesterday at SXSW, there’s a real possibility Google may be running the whole gamut of wearable computing.

MYO Gesture Control Armband

The MYO armband is an up-and-coming technology that you wear on the forearm. It grants you Jedi powers over wireless devices and is a strong contender in the ultimate goal of creating a Spider-Man web shooter. It is purely an input device (think of it as a Wii controller without the controller) which can read muscle activity in the arm and hand, allowing control over a variety of devices.

With a pair of MYOs on your arms, you can conduct an electronic symphony through arm and finger movements, control wireless quadcopters, flip through slides, or turn devices on and off. It has excellent implications for any wireless technology which requires any form of touch- or accelerometer-based input. Thanks to its ability to read electrical signals from the muscles, it has a lot of potential for personal data collection (a topic we will cover shortly).

Fitbit, Jawbone UP

I put these together because they’re close competitors and are used for the same purpose. Both of these gadgets are primarily used as advanced pedometers.

The Jawbone UP is a band you wear on your wrist which collects information about how your arms swing. By wearing the UP 24×7 it can keep track of the amount of activity you get, how many steps you walk, how long you stay idle, and even how you sleep at night. All the data it collects is shipped wirelessly to your smartphone where you can view and publish the information.

The Fitbit is the same concept (and in my opinion, a better device). You wear it on your pants or in your pocket when awake and tuck it into a wristband when you sleep. Fitbit also has an upcoming device called the Flex which will be purely for the wrist and is a direct competitor to the UP. Fitbit can record your steps, activity level, and the number of stairs you climb throughout the day, all of which is sent wirelessly to your phone for interaction.

Both of these devices have a cool feature in common: their phone and web-based tools allow you not only to see the data, but to correlate it with other activities. You are encouraged to enter the foods you ate or the mood you were in at certain times so you can view analytics on how the quality of your sleep, the food you eat, and your activity levels play into your day-to-day life. Both devices are pure sensors, but the data they collect is used primarily for helping you understand more about your activity levels and how you can improve.
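To make that kind of correlation a little more concrete, here is a minimal Python sketch. It assumes you have one sleep figure and one self-reported mood score per day; the numbers, the 1 to 5 mood scale, and the `pearson` helper are all made up for illustration and are not anything Fitbit or Jawbone actually expose.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical week of data: hours slept, and mood entered by hand (1 = bad, 5 = great).
sleep_hours = [6.0, 7.5, 5.0, 8.0, 6.5]
mood_scores = [3, 4, 2, 5, 3]

r = pearson(sleep_hours, mood_scores)
print(f"sleep vs. mood correlation: {r:.2f}")
```

A value near 1 would suggest that better-rested days tend to be better-mood days, though five data points are nowhere near enough to conclude anything; the point is only to show the shape of the calculation.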

Personal Data Collection

Personal Data Collection (PDC for short from this point on) is not a new concept; in fact it has been going on since people started writing diaries and journals. But with wearable computing, both its ease of collection and its benefits can increase exponentially. Part of the problem with forecasting or correlating events in a person’s life is that you have to rely on that person to keep accurate records of both activities and time. And often, there are details that are simply impossible for a person to measure or record, leading to missing data.

But what if we could capture most, if not all, of the personal data generated by a human being’s daily life?

The problem is the sensors. While we can put glass on a person’s face, bands on their forearms, and shoes on their feet, we are still missing a wide variety of data that comes from the brain and other organs. Of course it would be completely invasive to put a chip in everyone’s brain, gut, and kidneys just to grab some data, but boy would it be enlightening. In the case of organs, perhaps X PRIZE has it right and we should be looking at external collection methods, like their joint venture with Qualcomm challenging teams to make a working medical tricorder à la Star Trek for a $10 million prize.

Just with the devices I listed above, you can collect the following information individually:

- Steps walked, stairs climbed, whether the person limps or slows after a certain time

- Sleep movement, wakefulness, amount of time it takes to fall into deep sleep

- Downward pressure of each step, foot angle, general posture, walk type, activity level

- Arm movement frequency, finger movement frequency, limb dexterity, muscle group usage, muscle density, flexibility, frequent arm muscle group usage

- Voice data, talking speed, language, dialect, social interaction methods and frequency, aural and visual interactions with people and objects, audio preferences, visual preferences, chat preferences and frequency, and even an idea of pleasing visual stimulus from characteristics of photos taken, mood via voice analysis

That is a ton of data (most of it from the Google device, of course) and it doesn’t even begin to correlate the data between the different wearable gadgets. If these devices could talk to each other in some standardized way (Wearable Communication Protocol?), the amount of information we could put to use in our own personal lives would be astounding. For instance:

- Gain insight into what activities and visuals make us the most happy

- Gauge activity levels during a day following a certain sleep pattern

- Discover who you prefer to communicate with following meals, sleep, or exercise

- Determine the people who have the most positive influence on your life (very neat)

- Measure hand-eye and hand-foot coordination

- Track physical movement, fidgeting, and audio cues while using a computer (muscle movement in the fingers from typing)
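As a rough illustration of the second item in that list, here is a small Python sketch of what a shared record format might make possible. No such standard exists; the `Reading` record, the device and metric labels, the 7-hour threshold, and the sample numbers are all hypothetical, and each sleep reading is assumed to be filed under the day the night began.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Reading:
    """One measurement in an imagined common format shared by all devices."""
    device: str   # illustrative label, e.g. "up" or "fitbit"
    metric: str   # illustrative label, e.g. "sleep_hours" or "steps"
    day: date     # day the reading is filed under
    value: float


def by_day(readings):
    """Merge readings from any device into {day: {metric: value}}."""
    days = defaultdict(dict)
    for reading in readings:
        days[reading.day][reading.metric] = reading.value
    return dict(days)


def steps_after_sleep(readings, threshold_hours=7.0):
    """Average next-day step count after short nights vs. long nights."""
    days = by_day(readings)
    buckets = {"short": [], "long": []}
    for day, metrics in days.items():
        next_day = days.get(day + timedelta(days=1), {})
        if "sleep_hours" in metrics and "steps" in next_day:
            key = "short" if metrics["sleep_hours"] < threshold_hours else "long"
            buckets[key].append(next_day["steps"])
    # Skip a bucket entirely if no days fell into it.
    return {key: sum(vals) / len(vals) for key, vals in buckets.items() if vals}


# Made-up data: sleep from one device, steps from another.
start = date(2013, 3, 10)
readings = [
    Reading("up", "sleep_hours", start, 5.5),
    Reading("fitbit", "steps", start + timedelta(days=1), 4000),
    Reading("up", "sleep_hours", start + timedelta(days=1), 8.0),
    Reading("fitbit", "steps", start + timedelta(days=2), 9000),
    Reading("up", "sleep_hours", start + timedelta(days=2), 6.0),
    Reading("fitbit", "steps", start + timedelta(days=3), 5000),
]

result = steps_after_sleep(readings)
print(result)
```

The interesting part is not the arithmetic but the fact that once two gadgets agree on a record shape, questions that span both of them become a few lines of code.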

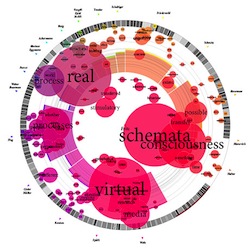

It all makes for an extremely impressive set of information which can be used for trending and forecasting along with comprehensive personal data visualization. This information can be used to make decisions, provide data to doctors or psychotherapists, and help with posture, sleeping habits, pain management, activity levels, even social interactions. Knowledge is power, as they say, and personal data collection through sensor measurement has the ability to help us transform our lives.

There are plenty of other things we could easily detect with modern technology: sweat levels, eye movements, breath depth, even muscle movements in the face to detect smiling and frowning. And who knows what the future will bring? Is there some way to measure caloric and nutritional intake without requiring the eater to jot down their meal? The more we can collect and store, the more we can crunch and analyze. No matter how you look at it, the real value of wearable computing is not consumer entertainment but consumer enlightenment.

Complex, dynamic biological systems such as human beings haven’t been amenable to electronic sensors outside of a hospital or other high-maintenance (expensive) setting. My father was trying to develop an affordable, reliable internal insulin pump for diabetics when he was a Howard Hughes fellow at Harvard in 1968. No one has accomplished that, even now, 45 years later.

In general, I believe that wearable computing and personal data collection devices are best for entertainment. There will probably be efforts to use them for privacy-invasive purposes. I don’t think that will be money well spent, as it isn’t likely to be accurate, at least not for a while yet.

Fortunately, Oracle is sensible, and hasn’t gotten involved in this! Oracle products would be good for managing and accessing wearable device-associated data. That’s ethically distinct from facilitating design, development, manufacture and marketing of such devices. Just because Google does it, doesn’t mean it is the right thing to do.

I think that this highlights why these devices are finally achieving success: the companies making them know that without entertainment value they won’t get the data they want. It’s the new model that social sites like Facebook, Twitter, LinkedIn, Google, etc. are prospering on. Offer a service to millions of people. These people think they are the customer. They think this service is for them. But little do they realize that they are the inventory. We enjoy the fruits of Facebook, share all, invite friends, and all the while our data is being used for the real customer: advertisers.

With wearable computing it has gone to a new level. Not only will people use it, they will pay to do so. Buy a Pebble watch and see Mario sitting on a clock on your wrist. Buy Google Glass and upload things you see to YouTube. All the while, Glass is getting information on places you’ve gone, pictures you’ve taken, things you’ve seen. It delivers fantastic entertainment at a price, all the while providing the manufacturer with invaluable data that they wouldn’t have gotten otherwise. I sort of covered that concept here: http://www.oraclealchemist.com/news/just-how-big-is-your-data/

Thanks for your insightful comments!